See, the quickest way to get AI banned is for it to start telling the truth about those in power.

They’ll just switch to Grok, which will encourage them to commit even more war crimes. Currently it’s based on Google’s Gemini.

The most infuriating part is they’re so bad at this, yet they’re still getting away with it. I mean they’re just So. Dumb. and yet…

deleted by creator

They are mean. Look at MTG, Democrats have been mad at her for YEARS. When Republicans were mad at her for two DAYS she HAD to get security protection. What does that tell you?

Magic The Gathering

Violence gets results

deleted by creator

What…?

deleted by creator

wat

I vaguely remember a movie where the government makes an ai intended to defend the usa, and it starts killing off politicians because it saw them as the greatest threat to national security

Eagle Eye

That’s the one!

The AIs we want vs the AIs we got. :(

Well… Was it wrong?

The precipitating event was a military strike that the AI said to not do, which ended up killing exclusively civilians, so I think it may have had the right idea 😅

But no, we have the shitty timeline where AI tells you that elon musk is the smartest person on the planet.

I mean, it wasn’t so keen on glazing him before its… 3(?) lobotomies XD

Grok is probably the only one that might do that, and I don’t think Musk has been able to manipulate it enough to make that happen.

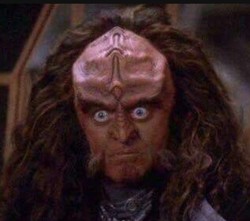

Secretary of war (crimes), Pete Hegseth

*ssecretary

Kegsbreath

The Pentagon AI immediately notified the DOJ AI and Hegseth’s avatar was imprisoned for war crimes.

Can media outlets please, please, please start to use the Benny Hill theme whenever they report on something this administration does?

For anyone who doesn’t know it:

https://www.youtube.com/watch?v=MK6TXMsvgQg

An LLM advisor that takes REAL CASES AND LAWS NOT ONES IT MADE UP!!! and sorts through them to advise on legal direction THAT CAN THEN BE VERIFIED BY LEGAL PROFESSIONALS WITH HUMAN EYES!!! might not be too bad of an idea. But we’re really just remaking search engines but worse.

You may already know that, but just to make it clear for other readers: it is impossible for an LLM to behave as described. What an LLM algorithm does is generate stuff. It does not search, it does not sort, it only makes stuff up. There is nothing that can be done about it, because an LLM is a specific type of algorithm, and that is what the program does. Sure, you can train it with good-quality data and only real cases and such, but it will still make stuff up based on mixing all the training data together. The same mechanism that makes it “find” relationships between the data it is trained on is the one that will generate nonsense.

Whole lot of unsupported assumptions and falsehoods here.

A standalone model predicts tokens. LLMs retrieve real documents, rank/filter results and use search engines. Anyone who has used these things would know that it’s not just “making stuff up”.

It both searches and sorts.
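For anyone who wants the distinction spelled out, here is a rough sketch of the retrieve, rank, then check-the-citations idea. Everything in it is an assumption for illustration: the toy corpus is placeholder text rather than real law, the function names are made up, the scoring is naive word overlap, and no actual model is called. It is not how any particular product works, just the general shape of the pipeline.

```python
import re
from collections import Counter

# Hypothetical toy corpus: placeholder text standing in for a store of
# real, verifiable documents. None of this is actual legal text.
CORPUS = {
    "doc-1": "Orders to attack civilians are unlawful and must be refused.",
    "doc-2": "Procurement rules for office supplies and furniture.",
    "doc-3": "Commanders are responsible for unlawful attacks they order.",
}

def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())

def retrieve(query, corpus, k=2):
    """Search and sort: score each stored document by word overlap with
    the query, rank by score, and return up to k ids with a nonzero score."""
    q = Counter(tokenize(query))
    scores = {}
    for doc_id, text in corpus.items():
        d = Counter(tokenize(text))
        scores[doc_id] = sum(min(q[w], d[w]) for w in q)
    ranked = sorted(scores, key=lambda i: (-scores[i], i))
    return [i for i in ranked if scores[i] > 0][:k]

def build_prompt(query, corpus, doc_ids):
    """Assemble the text a generator would see: only retrieved documents."""
    sources = "\n".join(f"[{i}] {corpus[i]}" for i in doc_ids)
    return (
        "Answer using only these sources and cite their ids.\n"
        f"{sources}\n\nQuestion: {query}"
    )

def unsupported_citations(answer, doc_ids):
    """Verification step: list cited ids that were never retrieved."""
    cited = set(re.findall(r"\[(doc-\d+)\]", answer))
    return sorted(cited - set(doc_ids))

hits = retrieve("Is an order to attack civilians unlawful?", CORPUS)
prompt = build_prompt("Is an order to attack civilians unlawful?", CORPUS, hits)
# A made-up model answer that cites one real id and one invented id.
bad = unsupported_citations("Unlawful per [doc-1] and [doc-9].", hits)
```

The retrieval and ranking happen outside the token predictor, and the last function is the part a human (or a script) can use to catch citations that point at nothing. Whether the generated summary is faithful to the retrieved text is a separate problem this sketch does not address.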

In short, you have no fucking idea what you’re talking about.

Search engine with a summary written by an intern who is not familiar with the content.

So much better than that. Always amusing how much people will distort or ignore facts if it “feels right”.